Machine learning algorithms have rapidly become a regular part of our daily lives. Machine learning, a branch of artificial intelligence, is probably used for more than you might think; it has become so omnipresent that we are often completely unaware an algorithm is involved. Smartphone assistants, traffic route planning, web search results – the list goes on.

With multiple complex, interrelated algorithms being adopted for industrial, automotive, and medical applications, there is a growing need to understand why an ML algorithm inferred a particular result. The term ‘explainable AI’ (xAI) is increasingly used to describe the ability to explain an algorithm’s result and the underlying factors on which that result was based.

This article by Mark Patrick of Mouser Electronics introduces xAI and explains why it is an essential consideration for any new machine learning application.

AI and machine learning in our daily lives

It’s hard to say when machine learning (ML) became part of our daily lives. There was no big launch, sometimes not even a mention, but ML slowly and surely became an intrinsic part of our daily interaction with technology. Most of us first encountered it through a smartphone assistant such as Google Assistant or Apple’s Siri. ML quickly became a dominant function in automotive advanced driver assistance systems (ADAS) too, such as adaptive cruise control (ACC), active lane assist (ALA), and road sign identification. Then there are the many other applications that we may not realise use ML. Financial and insurance companies use ML for various document processing functions, and medical and healthcare diagnostic systems use ML’s ability to detect patterns in patient MRI scans and test results.

ML has rapidly become part of our daily lives, and we have come to depend on its ability to make fast decisions.

Understanding an algorithm’s decision-making process

Our trust in, and reliance on, the decisions made by machine learning-based applications have recently led some consumer and professional ethics groups to raise concerns.

To understand how ML systems arrive at a result and its probability, let’s briefly review how they work.

Machine learning uses an algorithm to mimic the decision-making process of the human brain. The neurons in our brain are replicated in a mathematical model of a neural network. Like our brain, this artificial neural network (ANN) can infer a result, with a degree of probability, from its acquired knowledge. Just as we do from the moment we are born, the ANN develops understanding through learning, so training is a fundamental part of any machine learning model.

Different types of neural network model suit different tasks; for instance, a convolutional neural network (CNN) is well suited to image recognition and a recurrent neural network (RNN) to speech processing. The model acquires knowledge by processing massive amounts of training data. For an animal recognition application based on a CNN, you would need tens of thousands of images of different animals, each labelled with its name: multiple images of each species and gender, taken from various angles and under different ambient lighting conditions. Once the model has been trained, the testing phase begins, using test images the model has not processed before. For each test image, the model infers a result with an associated probability. Inference accuracy generally improves with more training data and a better-optimised neural network.
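To make "inferring a result with a degree of probability" more concrete, here is a minimal Python sketch of a forward pass through a tiny fully connected network that ends in a softmax. The weights, layer sizes and labels are hypothetical; in a real application the weights come from training, and an image task would use convolutional layers rather than the dense layers shown here.

```python
import math

def dense(inputs, weights, biases):
    """One fully connected layer: each output neuron is a weighted sum of inputs plus a bias."""
    return [sum(w * x for w, x in zip(row, inputs)) + b
            for row, b in zip(weights, biases)]

def relu(values):
    """Simple non-linear activation applied between layers."""
    return [max(0.0, v) for v in values]

def softmax(scores):
    """Turn raw output scores into probabilities that sum to 1."""
    m = max(scores)  # subtract the maximum for numerical stability
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

def infer(features, layers, labels):
    """Run a forward pass and return the most probable label with its probability."""
    x = features
    for i, (weights, biases) in enumerate(layers):
        x = dense(x, weights, biases)
        if i < len(layers) - 1:
            x = relu(x)  # hidden layers only; the last layer feeds softmax
    probs = softmax(x)
    best = max(range(len(probs)), key=probs.__getitem__)
    return labels[best], probs[best]

# Hypothetical single-layer "model" with three output classes.
layers = [([[1.0, 0.0], [0.0, 1.0], [0.0, 0.0]], [0.0, 0.0, 0.0])]
label, probability = infer([2.0, 0.5], layers, ["cat", "dog", "fox"])
```

The probability returned with the label reflects the model's confidence in that one result; it is not the same thing as the model's accuracy on unseen data, which is measured separately during testing.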

Once the inference accuracy for the required tasks is sufficiently high, application developers can deploy the machine learning model.
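What counts as "sufficiently high" depends on the application. As a hedged illustration, the sketch below measures accuracy on held-out test data for each class separately and approves deployment only when every class clears a threshold, so a weak class cannot hide behind a good average. The function names and the 0.95 default are hypothetical choices, not an industry standard.

```python
def per_class_accuracy(predictions, ground_truth):
    """Accuracy broken down by true class on held-out test data."""
    if len(predictions) != len(ground_truth) or not predictions:
        raise ValueError("need equal-length, non-empty prediction and label lists")
    totals, hits = {}, {}
    for predicted, actual in zip(predictions, ground_truth):
        totals[actual] = totals.get(actual, 0) + 1
        hits[actual] = hits.get(actual, 0) + (predicted == actual)
    return {cls: hits[cls] / totals[cls] for cls in totals}

def ready_to_deploy(predictions, ground_truth, threshold=0.95):
    """Hypothetical release gate: every class must meet the accuracy threshold."""
    scores = per_class_accuracy(predictions, ground_truth)
    return all(score >= threshold for score in scores.values()), scores

ok, scores = ready_to_deploy(["ok", "ok", "fault", "ok"],
                             ["ok", "ok", "fault", "fault"])
```

Here overall accuracy is 75%, but the per-class view shows that only half of the fault cases were detected, which is exactly the kind of gap a single average would mask.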

A simple industrial, edge-based application of machine learning is the condition monitoring of a motor through its vibration signature. By adding a vibration sensor (piezo, MEMS, or digital microphone) to an industrial motor, you can record a detailed set of vibration signatures. Recording motors taken out of the field with known mechanical faults (worn bearings, driver problems, etc.) adds depth to the training data. The resulting model can continuously monitor a motor and provide ongoing insight into its health. Running neural networks like this on a low-power microcontroller is referred to as TinyML.
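The sketch below illustrates the idea under stated assumptions: raw samples from the vibration sensor arrive in fixed-length windows, and each window is labelled with a known motor condition. It deliberately swaps in a much simpler technique than a neural network: every window is summarised by two hand-picked features (RMS level and crest factor) and classified by nearest centroid against the labelled recordings. The labels and sample values are hypothetical; a TinyML deployment would typically feed such features, or a spectrum, into a small trained network instead.

```python
import math

def features(window):
    """Summarise a window of vibration samples as (RMS, crest factor)."""
    rms = math.sqrt(sum(s * s for s in window) / len(window))
    peak = max(abs(s) for s in window)
    crest = peak / rms if rms else 0.0  # impacts from bearing faults raise the crest factor
    return (rms, crest)

def train_centroids(labelled_windows):
    """Average the feature vectors recorded for each known motor condition."""
    sums, counts = {}, {}
    for label, window in labelled_windows:
        f = features(window)
        acc = sums.setdefault(label, [0.0] * len(f))
        for i, value in enumerate(f):
            acc[i] += value
        counts[label] = counts.get(label, 0) + 1
    return {label: tuple(v / counts[label] for v in acc) for label, acc in sums.items()}

def classify(window, centroids):
    """Label a new window with the nearest condition centroid in feature space."""
    f = features(window)
    return min(centroids, key=lambda label: math.dist(f, centroids[label]))

# Hypothetical training recordings: healthy motors and motors with worn bearings.
training = [
    ("healthy", [0.10, -0.10, 0.10, -0.10]),
    ("healthy", [0.12, -0.12, 0.12, -0.12]),
    ("worn_bearing", [0.10, -0.10, 1.00, -0.10]),
    ("worn_bearing", [0.10, -0.10, 0.90, -0.10]),
]
centroids = train_centroids(training)
```

A new window such as `[0.1, -0.1, 0.95, -0.1]`, with a single sharp spike, lands next to the worn-bearing centroid because of its high crest factor.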

What is explainable AI?

As highlighted in the previous section, the output of some ML-based applications has raised concerns that the results are in some way biased. The debate over whether ML and AI algorithms exhibit bias has several aspects, but there is a widely held view that all ML results should be more transparent, fair, ethical, and moral. Most neural networks operate as a ‘black box’: data goes in and a result comes out, but the model provides no insight into how the outcome was determined. There is therefore a growing need for algorithm-based decisions to come with an explanation of their basis. The results should also be lawful and ethical; hence the concept of explainable AI (xAI).
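One family of xAI techniques treats the model as a black box and probes it from the outside. The sketch below shows a simple occlusion (perturbation) approach: each input feature is replaced in turn by a neutral baseline value, and the change in the model's output is taken as that feature's contribution. It is one illustrative technique among several, not a method prescribed by this article; the `black_box` function and feature names are hypothetical stand-ins for a trained model.

```python
def occlusion_attribution(model, inputs, baseline=0.0):
    """Estimate each feature's contribution to a black-box model's output score."""
    reference = model(inputs)
    contributions = []
    for i in range(len(inputs)):
        perturbed = list(inputs)
        perturbed[i] = baseline  # "remove" one feature at a time
        contributions.append(reference - model(perturbed))
    return contributions

def explain(model, inputs, names, baseline=0.0):
    """Rank features by the size of their estimated contribution."""
    scores = occlusion_attribution(model, inputs, baseline)
    return sorted(zip(names, scores), key=lambda pair: abs(pair[1]), reverse=True)

# Hypothetical black-box model: we can only call it, not inspect it.
def black_box(x):
    return 3.0 * x[0] + 0.5 * x[1]

ranking = explain(black_box, [1.0, 2.0], ["vibration", "temperature"])
```

The choice of baseline matters, and perturbing one feature at a time can miss interactions between features, so results like these are a starting point for an explanation rather than a proof.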

In this short article, we can only touch on the concepts behind xAI, but readers will find the white papers by semiconductor vendor NXP and management consultancy PwC informative.

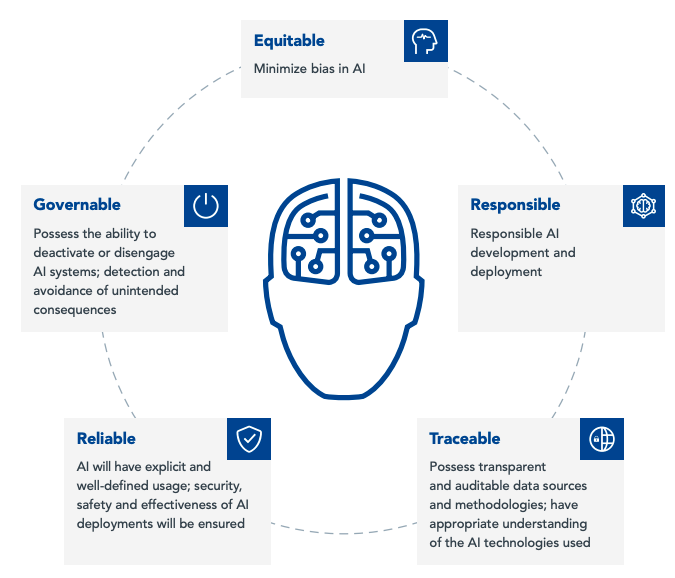

Figure 1 highlights a holistic approach proposed by NXP to developing an ethical and trustworthy AI system.

To illustrate the requirements of xAI, let’s look at two possible application scenarios.

Automotive – autonomous vehicle control: Imagine you are a passenger in a taxi with a human driver. If the driver is going slowly, you could ask, “Why are you driving so slowly?” The driver could then explain that the cold conditions have made the roads icy and that they are being extremely cautious to avoid the taxi sliding out of control. In an autonomous taxi, however, there is no driver to ask. The decision to drive slowly probably comes from several interdependent machine learning systems (environmental, traction, etc.), all working together to infer that a slow speed is prudent. Another part of the self-driving vehicle’s systems should therefore communicate the reasons behind these decisions, audibly and visually, to keep the passenger informed and reassured throughout the journey.
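A minimal sketch of that last idea, assuming (hypothetically) that each subsystem reports not just a recommended maximum speed but also a human-readable reason. The `Advice` type, the `decide_speed` function and the "take the most cautious limit" rule are illustrative assumptions, not how any production autonomous vehicle arbitrates between its systems.

```python
from dataclasses import dataclass

@dataclass
class Advice:
    source: str           # which subsystem produced the recommendation
    max_speed_kmh: float  # the highest speed that subsystem considers safe
    reason: str           # explanation that can be shown or spoken to the passenger

def decide_speed(advice_list, legal_limit_kmh):
    """Pick the most cautious speed and keep the reasons that determined it."""
    target = min([legal_limit_kmh] + [a.max_speed_kmh for a in advice_list])
    reasons = [f"{a.source}: {a.reason}" for a in advice_list
               if a.max_speed_kmh == target]
    if not reasons:
        reasons = ["Driving at the legal speed limit"]
    return target, reasons

speed, why = decide_speed(
    [Advice("environment", 40, "Road surface below freezing; ice likely"),
     Advice("traction", 30, "Wheel slip detected during braking")],
    legal_limit_kmh=50,
)
```

The point of the design is that the explanation travels with the decision, so the passenger interface never has to reconstruct after the fact why the vehicle slowed down.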

Healthcare – patient condition diagnosis: Consider an automated system that speeds up the identification of different types of skin condition. A photograph of a patient’s skin anomaly is input to the application, and the output is passed to a dermatologist, who proposes a treatment. There are many different types of human skin disorder: some are temporary, others permanent, and some are painful. Their severity ranges from relatively minor to life-threatening. Because of this range of possible disorders, the dermatologist might feel that further analysis is required before recommending a course of treatment. If the AI application could show the probability of its diagnosis alongside the other high-ranking results it inferred, the specialist could make a more informed decision.
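The sketch below shows one way an application could present that information, assuming the classifier already outputs one probability per condition. It returns the top-ranked diagnoses with their probabilities and flags a close call for specialist review when the leading result is not clearly ahead of the runner-up. The condition names, the `top_k_report` function and the 0.2 margin are hypothetical.

```python
def top_k_report(probabilities, labels, k=3, review_margin=0.2):
    """Return the k most probable diagnoses and flag close calls for review."""
    ranked = sorted(zip(labels, probabilities), key=lambda p: p[1], reverse=True)[:k]
    if len(ranked) > 1:
        margin = ranked[0][1] - ranked[1][1]  # lead of the best result over the runner-up
    else:
        margin = ranked[0][1]
    needs_review = margin < review_margin
    return ranked, needs_review

ranked, needs_review = top_k_report(
    [0.05, 0.48, 0.40, 0.07],
    ["eczema", "psoriasis", "dermatitis", "melanoma"],
)
```

In this example the top two conditions are only eight percentage points apart, so the report is flagged, giving the dermatologist both the ranking and a reason to look more closely.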

The two simple scenarios outlined above illustrate why xAI needs serious consideration. Organisations involved in financial services and governance face many more ethical and social dilemmas when using AI and ML.

When designing machine learning systems, embedded developers should review the following points.

- Is the training data a sufficiently broad and diverse representation of the items to be inferred?

- Has the test data been shown to represent all identified classification groups equally and in sufficient quantities?

- Does the algorithm-inferred result require an explanation?

- Can the neural network report the probabilities of the outcomes it has excluded, as well as its chosen answer?

- Do legal or regulatory constraints apply to the data the ML application processes?

- Is the ML application protected against adversaries attempting to compromise it?

- Can the ML application be trusted?
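The first two questions can be partly automated. The sketch below counts the samples per class in a labelled data set, including classes that are expected but missing entirely, and flags under-represented classes and excessive imbalance. The `class_balance_report` name and the default thresholds (100 samples per class, a 1.5:1 ratio) are hypothetical and should be set per application.

```python
from collections import Counter

def class_balance_report(labels, expected_classes, min_count=100, max_ratio=1.5):
    """Check that every expected class is present in sufficient, similar quantities.

    min_count: smallest acceptable number of samples for any class.
    max_ratio: largest acceptable ratio between the most and least common class.
    """
    counts = Counter(labels)
    per_class = {cls: counts.get(cls, 0) for cls in expected_classes}  # missing classes count as 0
    too_few = sorted(cls for cls, n in per_class.items() if n < min_count)
    smallest, largest = min(per_class.values()), max(per_class.values())
    imbalance = largest / smallest if smallest else float("inf")
    return {
        "counts": per_class,
        "too_few": too_few,
        "imbalance": imbalance,
        "balanced": not too_few and imbalance <= max_ratio,
    }

report = class_balance_report(
    ["healthy"] * 120 + ["worn_bearing"] * 100,
    expected_classes=["healthy", "worn_bearing", "misalignment"],
)
```

Passing the expected classes explicitly matters: a class with no samples at all would otherwise simply not appear in the counts and the gap would go unnoticed.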

Developing machine learning applications

Many embedded developers now work on projects that involve a machine learning function, such as the TinyML motor-monitoring example described earlier. However, ML is not limited to edge-based platforms; the concepts scale well to large industrial deployments. Examples of industrial ML deployments include machine vision, condition monitoring, safety, and security.

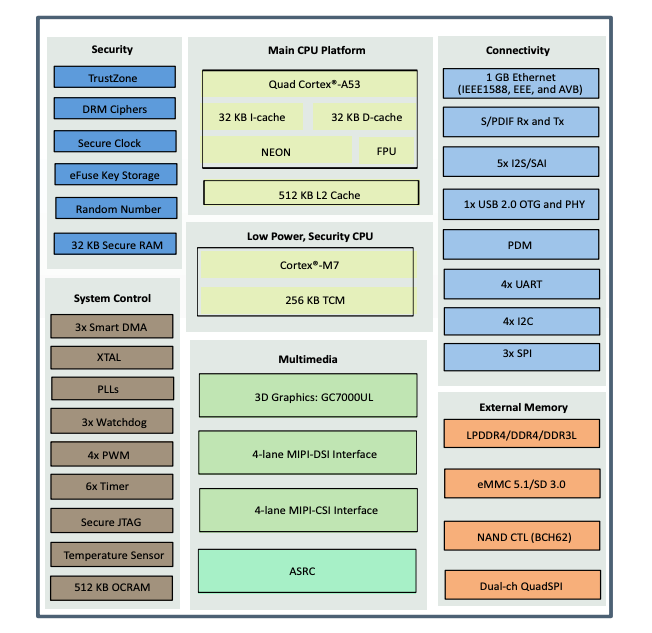

Semiconductor vendors now offer microcontrollers and microprocessors optimised for machine learning applications. An example is the NXP i.MX 8M Nano UltraLite application processor. Part of NXP’s i.MX 8M family, the Nano UltraLite (NanoUL) is equipped with a quad-core Arm Cortex-A53 primary processor operating at up to 1.5GHz, and a general-purpose Arm Cortex-M7 core running at up to 750MHz for real-time and low-power tasks.

Figure 2 highlights the major functional blocks of the i.MX 8M NanoUL, which include a comprehensive set of connectivity and peripheral interfaces, security functions, clocks, timers, watchdogs, and PWM blocks. The compact NanoUL application processor measures 11mm x 11mm.

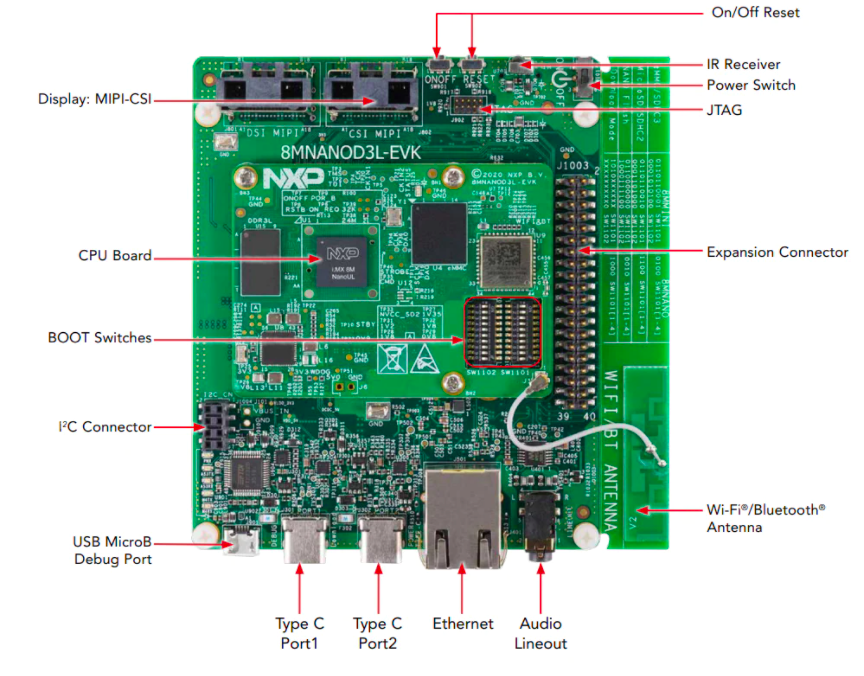

To help developers design i.MX 8M NanoUL applications, NXP offers the i.MX 8M Nano UltraLite Evaluation Kit – see Figure 3. Comprising a baseboard and a NanoUL processor board, the kit provides a complete platform on which to develop a machine learning application.

There is already an established ecosystem of machine learning resources, frameworks, and development platforms to prototype an ML design, whether based on a low power edge MCU or a powerful MPU.

TensorFlow Lite is a lightweight variant of Google’s enterprise-grade, open-source TensorFlow ML framework, designed for embedded devices; its TensorFlow Lite for Microcontrollers port explicitly targets low-power, resource-constrained microcontrollers. It can run on Arm Cortex-M series cores and occupies just 18kB of memory. TensorFlow Lite provides all the resources needed to deploy a model on embedded devices.
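Part of what makes such small footprints possible is 8-bit quantization. TensorFlow Lite's integer quantization represents each real value with the affine mapping real = scale × (q − zero_point), where q is an int8 value, so each weight needs one byte instead of the four used by float32. The sketch below reproduces that arithmetic in plain Python; the way `quantization_params` chooses scale and zero point from a min/max range is a simplified assumption, not the TensorFlow Lite converter's actual calibration code.

```python
def quantization_params(min_val, max_val, qmin=-128, qmax=127):
    """Choose a scale and zero point mapping [min_val, max_val] onto the int8 range."""
    min_val, max_val = min(min_val, 0.0), max(max_val, 0.0)  # range must contain 0
    scale = (max_val - min_val) / (qmax - qmin)
    if scale == 0:
        scale = 1.0  # all-zero tensor: any scale represents it exactly
    zero_point = round(qmin - min_val / scale)
    return scale, max(qmin, min(qmax, zero_point))

def quantize(values, scale, zero_point, qmin=-128, qmax=127):
    """Map real values to clamped int8 codes."""
    return [max(qmin, min(qmax, round(v / scale) + zero_point)) for v in values]

def dequantize(qvalues, scale, zero_point):
    """Recover approximate real values: real = scale * (q - zero_point)."""
    return [scale * (q - zero_point) for q in qvalues]

weights = [-1.0, -0.5, 0.0, 0.25, 1.0]
scale, zero_point = quantization_params(min(weights), max(weights))
codes = quantize(weights, scale, zero_point)
approx = dequantize(codes, scale, zero_point)
```

Each recovered value is within about one quantization step (`scale`) of the original, and zero is represented exactly, which matters for operations such as zero padding.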

Edge Impulse takes a broader approach, offering an end-to-end solution that covers ingesting training data, selecting the optimal neural network model for the application, testing, and final deployment to an edge device. Edge Impulse works with the open-source ML frameworks TensorFlow and Keras.
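Tools like Edge Impulse automate the model-selection step. Purely to illustrate the idea, and without using any Edge Impulse API, the sketch below picks the most accurate of several candidate models on validation data, subject to the memory budget of the target device. The `Candidate` type, the sizes and the toy predictors are hypothetical.

```python
from dataclasses import dataclass
from typing import Callable, Sequence

@dataclass
class Candidate:
    name: str
    model_size_kb: float
    predict: Callable[[Sequence[float]], str]

def select_model(candidates, validation_set, memory_budget_kb):
    """Return the most accurate candidate that fits the device, or (None, -1.0) if none fits."""
    best, best_acc = None, -1.0
    for cand in candidates:
        if cand.model_size_kb > memory_budget_kb:
            continue  # would not fit on the edge device
        correct = sum(cand.predict(x) == y for x, y in validation_set)
        acc = correct / len(validation_set)
        if acc > best_acc:
            best, best_acc = cand, acc
    return best, best_acc

validation = [([0.1], "ok"), ([0.9], "fault"), ([0.2], "ok")]
candidates = [
    Candidate("large_cnn", 900, lambda x: "ok" if x[0] < 0.5 else "fault"),
    Candidate("small_mlp", 40, lambda x: "ok" if x[0] < 0.5 else "fault"),
    Candidate("tiny_rule", 5, lambda x: "ok"),
]
chosen, accuracy = select_model(candidates, validation, memory_budget_kb=256)
```

With a 256kB budget the large model is skipped and the small one wins; tightening the budget to 10kB leaves only the weaker rule, showing the accuracy-versus-footprint trade-off that edge deployment forces.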

Explainable AI advances

Learning how to design and develop embedded ML applications offers significant opportunities for embedded systems engineers to advance their skills. When contemplating the specification and operation of the end application, it is also the perfect time to consider how the principle of explainable AI applies to the design. Explainable AI is changing the way we think about machine learning, and an embedded developer can make a significant contribution by introducing more context, confidence, and trust into an application.

Further reading on explainable AI can be found on our sister title, Electronic Specifier:

Understanding complexity – the role of explainable AI for engineers