This piece from EBV answers the question: when prototyping machine vision applications, what are some of the factors that developers must keep in mind to deploy the right systems?

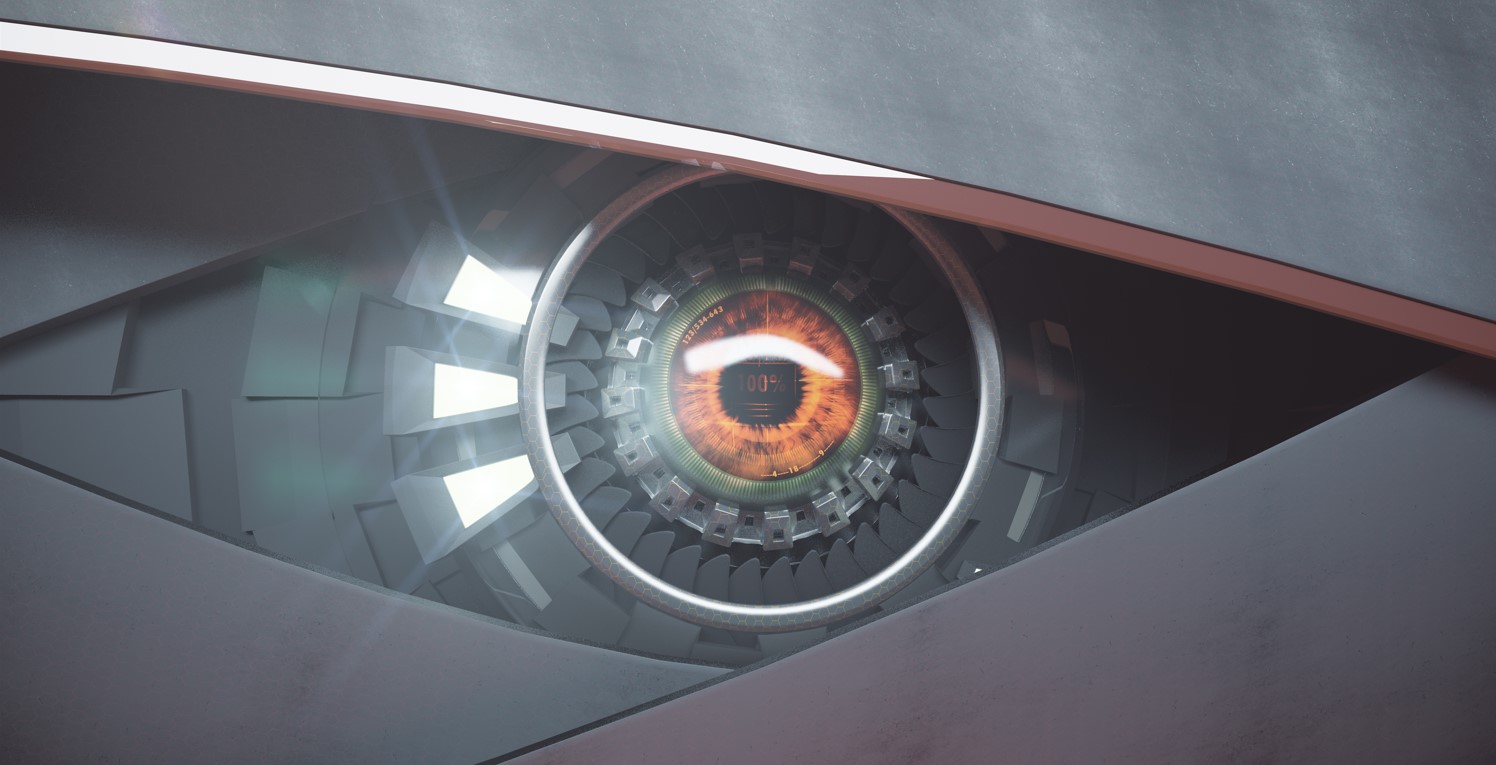

A machine vision (MV) function gives a system the ability to use cameras, light sensors, and analogue-to-digital converters to create a digital representation of objects and the surrounding environment. The basic concepts of machine vision have been employed for decades in a broad range of markets, from healthcare, defence and aerospace to industrial automation.

Significant opportunities

With the continued evolution of 3D sensor technologies, the considerable cost reductions of CMOS image sensors, increasingly compact sensor packaging and the availability of high-performance processors, the machine vision market is experiencing dramatic growth.

Grand View Research, a technology market research company, predicts that the global MV market will grow at a compound annual growth rate (CAGR) of 7.7 per cent and reach US$18.25 billion by 2025.

Machine vision markets and applications include:

- Industrial process and manufacturing automation: visual inspection, diagnostics, assembly, mobile robots, digital production, service robots, rescue robots

- Smart transport systems: traffic monitoring, autonomous vehicles, advanced driver assistance systems (ADAS)

- Safety, security, and law enforcement: security surveillance, camera networks, remote sensing underwater and in rugged surroundings

- Life sciences and consumer: agriculture, forestry, fisheries, construction and civil engineering, retail, sport, fashion, homewares etc.

- Content curation applications: archiving/restoration, document management

- Multimedia: virtual reality (VR)/mixed reality (MR)/augmented reality (AR), entertainment

- Biomedicine: tomography, endoscopy, computer-aided diagnostics, computer-aided surgery, computer-aided anatomy, bioinformatics, nursing, genomics

- Human-to-machine interfaces (HMIs): facial/gesture/behavioural/gait/eye-tracking analyses, biometrics, wearable computing, first-person vision (FPV) systems, advertising

According to research company Gartner, the machine vision market has significant potential. A recent research report highlighted some key findings for 2025:

- MV-enabled ADAS applications in vehicles will rise from 10% to 35%.

- Retailers will experience a 20% increase in customer traffic through MV deployment and a 10% profit margin uplift thanks to better advertising campaign targeting.

- The top five consumer electronics brands will incorporate MV features into 20% of smart home appliances.

- Machine vision will deliver facial recognition and gesture detection as a default authentication mechanism for all premium smartphones and 30% of basic smartphones.

The top challenges of machine vision development

A good starting point for any machine vision development is engaging and partnering with a reliable technology provider. Critical decisions include selecting the optimal hardware, the most appropriate and dependable sensor interface standards, and a relevant and well-proven neural network machine learning algorithm.

Developers who collaborate with a technology partner that has delivered multiple MV projects will benefit from its subject matter expertise and knowledge, particularly during the critical implementation phase.

Of course, to achieve such success, there are vital criteria of machine vision implementation that must be considered. The following subsections discuss some key requirements for successful MV development.

The securement of data quality

Developers must access high-quality data before commencing an AI development. The success of any machine learning MV application depends on capturing, processing, analysing, and recognising images. The initial object recognition phase uses groups of images to train the machine learning network.

The higher the quality of the training data, the higher the probability that the algorithm, and consequently the MV system, correctly identifies an image. Poor image quality or insufficient training data reduces the chances of correct object detection and impacts the success of the MV application. The reliable operation of a machine vision system will be compromised if the AI algorithm hasn’t been trained with enough high-quality training data.
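One way to act on this is to screen candidate training images before they reach the network. The sketch below is a minimal illustration, not a method described by EBV: it flags images that are too dark, too bright, or lacking in detail, using the variance of a simple Laplacian as a sharpness score. The function names and every threshold are assumptions chosen for the example, and images are assumed to be 8-bit greyscale values held in nested lists.

```python
from statistics import mean, pvariance


def laplacian_variance(img):
    """Variance of a 4-neighbour Laplacian over the interior pixels.

    Low values mean there are few edges, which often indicates blur.
    """
    h, w = len(img), len(img[0])
    responses = [
        img[y - 1][x] + img[y + 1][x] + img[y][x - 1] + img[y][x + 1]
        - 4 * img[y][x]
        for y in range(1, h - 1)
        for x in range(1, w - 1)
    ]
    return pvariance(responses)


def screen_image(img, min_sharpness=100.0, min_brightness=30.0,
                 max_brightness=225.0):
    """Return the reasons to reject an image (an empty list means keep it).

    All thresholds are illustrative assumptions and need tuning per data set.
    """
    problems = []
    brightness = mean(p for row in img for p in row)
    if brightness < min_brightness:
        problems.append("under-exposed")
    elif brightness > max_brightness:
        problems.append("over-exposed")
    if laplacian_variance(img) < min_sharpness:
        problems.append("blurry")
    return problems


# Synthetic 8x8 test images: a checkerboard (strong edges) and a flat grey field.
checkerboard = [[255 if (x + y) % 2 == 0 else 0 for x in range(8)]
                for y in range(8)]
flat_grey = [[128] * 8 for _ in range(8)]

print(screen_image(checkerboard))  # no problems reported
print(screen_image(flat_grey))     # flagged as lacking detail
```

A check like this only catches gross capture problems. It says nothing about whether the data set covers enough objects, viewpoints, and lighting conditions, which still has to be reviewed separately.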

The setting of realistic goals and expectations

The human brain is probably the best example of a multi-tasking machine: it is able to process inputs from all five senses simultaneously and instantly respond to them. However, machines have not mastered similar capabilities. If an AI system is expected to perform too many tasks, it will encounter processing problems. Developers need to focus on the features and capabilities considered vital to the application to avoid such challenges. Incorporating too many different tasks in the initial application will typically result in poor MV operation.

The use of an AI framework

AI developers rely on their knowledge of frameworks and programming languages to design successful MV applications. Frameworks add structure to an AI project by templating the development process and expediting an AI application’s training, testing, and deployment. They are available for a broad range of machine learning, deep learning, neural networks, and NLP (natural language processing) tasks.

A variety of programming languages and frameworks are available for AI applications, and each has its own strengths. They include PyTorch, TensorFlow, Caffe, Keras, Python, C++, Java, Lisp and various model zoos.

To assist developers in selecting which framework and neural network is best for their application, EBV offers access to its team of machine learning and AI experts. The EBV team can also explain the differences between the various vendors’ solutions for AI integration and which hardware is particularly well-suited to a specific use case.

Another decision that members of the MV design team should consider is whether they will use an external programming resource or have an internal development capability that is available for the project’s duration. Other aspects to consider include auditing the programmer’s skills and qualifications, which development tools to use, and how updates will be distributed.

Processor considerations

There are several hardware options for AI-based MV applications ranging from FPGAs and GPUs to CPUs and combinations with external accelerator circuits.

Field-programmable gate arrays provide a high-performance configurable compute capability that can meet the requirements of almost any application. Such FPGAs are equipped with a specialised programmable architecture that suits complex neural network machine learning applications. FPGAs offer the ability to achieve performance gains, a lower price point, and higher efficiency compared to GPUs and CPUs.

GPUs, or graphics processing units, are specialised compute devices that are optimised for tackling image and video processing. Compared to CPUs, they feature less complex compute elements in larger quantities. GPUs are ideally suited to processing large volumes of data, such as image pixels or video streams, in parallel. One drawback of GPUs is that they typically consume considerably more power than a CPU. Also, mainstream programming languages are typically not supported, limiting developers to using specialised tools such as CUDA and OpenCL.

CPUs, or central processing units, are extremely capable compute devices; however, the large amounts of data that a neural network must process may restrict their use to relatively small systems. That said, because they support a wide range of programming languages and integrated development environments, application development can be achieved at speed.

Other compute hardware selection considerations include the power consumption profile of the chosen device, number of peripheral interfaces, and the likely costs.

The development team should carefully consider whether the processor, memory, and associated devices offer sufficient compute and storage resources across the lifecycle of the machine vision system to accommodate enhancements and firmware updates.

The MV use case has a significant impact on the performance characteristics. Some AI applications (for example, condition monitoring) are less power-hungry than processing and scaling high-frame-rate video streams. An application like facial recognition typically has a low frame rate and would suit using a CPU with or without hardware acceleration. Dedicated hardware accelerators, FPGAs, GPUs, and powerful CPUs can accommodate running low-latency, high-frame-rate neural networks.

A popular measure of a computing device’s performance capability is TOPS (tera operations per second), although it gives only an indication of the performance that can be achieved in practice.
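As a rough illustration of why a TOPS figure is only an indication, the sketch below estimates the compute rate that a network needs at a given frame rate and compares it with a derated share of a device's peak rating. Every number here is a hypothetical assumption: the operations per inference, the 1 TOPS rating, and in particular the 30% utilisation factor, since sustained throughput depends on the model, memory bandwidth, and toolchain.

```python
def required_tops(ops_per_inference, frames_per_second):
    """Compute rate needed, in tera operations per second."""
    return ops_per_inference * frames_per_second / 1e12


def fits_device(device_peak_tops, ops_per_inference, frames_per_second,
                utilisation=0.3):
    """Check the requirement against a derated share of the peak rating.

    `utilisation` is an assumed fraction of peak TOPS that is actually
    sustained; it is a placeholder, not a measured figure.
    """
    needed = required_tops(ops_per_inference, frames_per_second)
    return needed <= device_peak_tops * utilisation


# Hypothetical network costing 8e9 operations per frame.
OPS_PER_FRAME = 8e9

print(required_tops(OPS_PER_FRAME, 30))      # roughly 0.24 TOPS at 30 fps
print(fits_device(1.0, OPS_PER_FRAME, 30))   # within the derated budget
print(fits_device(1.0, OPS_PER_FRAME, 60))   # exceeds the derated budget
```

In this example, doubling the frame rate moves the same device from adequate to insufficient, even though its headline rating is well above the raw requirement in both cases.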

Further machine vision considerations

Sensors and object illumination

Significant advances in CMOS sensor technology for FSI (frontside illumination) and BSI (backside illumination) provide images with a higher resolution, even in low light conditions. Adequately lighting the object detection area is an essential aspect of any MV application and determines the overall accuracy and efficiency of an image processing application.

Lighting quality involves the measurement of three main characteristics of an image sensor: quantum efficiency (QE), dark current, and saturation capacity. Quantum efficiency indicates the proportion of collected photons that are converted to electrons. Because quantum efficiency is a function of wavelength, it is specified for the operating wavelength, where it provides an accurate measure of the sensor’s light sensitivity. Also, once a sensor is installed in a camera, the optical path lowers the maximum quantum efficiency of the camera.

MV developers should take the dark current and saturation capacity into account when they are designing image processing systems. Dark current is the flow of electrons generated thermally within the CMOS image sensor, and its fluctuations cause noise. The saturation capacity of a sensor denotes the number of electrons that can be stored in a single pixel.

Although camera manufacturers do not usually include these parameters in their datasheets, this information can be used together with the QE measurements to determine the maximum signal-to-noise ratio, the absolute sensitivity, and the dynamic range of an application.
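The relationships between these parameters can be sketched with the approximations commonly used in sensor characterisation (in the style of the EMVA 1288 standard). This is a simplified model under stated assumptions, not a calculation from the article: noise is taken as photon shot noise plus a temporal dark noise built from read noise and dark current, quantisation noise is ignored, and all sensor values are hypothetical.

```python
import math


def temporal_dark_noise(read_noise_e, dark_current_e_per_s, exposure_s):
    """Dark noise in electrons: read noise plus dark-current shot noise."""
    return math.sqrt(read_noise_e ** 2 + dark_current_e_per_s * exposure_s)


def snr(photons, qe, dark_noise_e):
    """Signal-to-noise ratio for a given number of photons per pixel."""
    signal_e = qe * photons  # electrons generated from the photons
    return signal_e / math.sqrt(signal_e + dark_noise_e ** 2)


def max_snr(saturation_capacity_e):
    """Shot-noise-limited SNR when the pixel is full."""
    return math.sqrt(saturation_capacity_e)


def dynamic_range_db(saturation_capacity_e, dark_noise_e):
    """Ratio of the largest to the smallest usable signal, in decibels."""
    return 20 * math.log10(saturation_capacity_e / dark_noise_e)


def absolute_sensitivity_photons(qe, dark_noise_e):
    """Approximate photon count at which the signal equals the noise."""
    return (dark_noise_e + 0.5) / qe


# Hypothetical sensor: QE 0.6, 3 e- read noise, 16 e-/s dark current,
# 1 s exposure, 10,000 e- saturation capacity.
dark_noise = temporal_dark_noise(3.0, 16.0, 1.0)

print(dark_noise)                                      # dark noise, electrons
print(max_snr(10_000))                                 # best-case SNR
print(round(dynamic_range_db(10_000, dark_noise), 1))  # dynamic range, dB
print(round(absolute_sensitivity_photons(0.6, dark_noise), 1))  # photons
```

The model makes the trade-offs visible: a longer exposure or a warmer sensor raises the dark noise, which reduces both the dynamic range and the sensitivity, while a larger saturation capacity raises the maximum signal-to-noise ratio.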

Review the image background

An MV object detection application works best when the image background differs from the observed object. For example, it is possible to have a security system that cannot distinguish between an object and a person standing behind it due to a lack of contrast.
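A simple way to quantify how much an object differs from its background is to compare the mean intensity of the two regions. The sketch below applies a Michelson-style contrast ratio to region means; this adaptation, the function names, the 0.2 threshold, and the pixel values are all assumptions for illustration, and a real system would obtain the two regions from a mask or an annotated sample image.

```python
from statistics import mean


def region_contrast(object_pixels, background_pixels):
    """Michelson-style contrast between two regions, from 0 (none) to 1."""
    obj = mean(object_pixels)
    bg = mean(background_pixels)
    if obj + bg == 0:
        return 0.0  # both regions are completely black
    return abs(obj - bg) / (obj + bg)


def has_enough_contrast(object_pixels, background_pixels, threshold=0.2):
    """Compare against an assumed, application-specific threshold."""
    return region_contrast(object_pixels, background_pixels) >= threshold


# Hypothetical 8-bit greyscale samples from the object and the background.
bright_part_on_dark_belt = ([200, 200, 200], [50, 50, 50])
grey_part_on_grey_belt = ([120, 120, 120], [110, 110, 110])

print(region_contrast(*bright_part_on_dark_belt))
print(has_enough_contrast(*bright_part_on_dark_belt))
print(has_enough_contrast(*grey_part_on_grey_belt))
```

Running a check like this on sample images during prototyping can show early on whether the background or the lighting needs to change before any training data is collected.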

In an industrial setting, any reflective surfaces present in the image may confuse the image recognition algorithm, resulting in detection errors. These difficulties can be overcome by combining algorithms with adaptive lighting and with illumination at other wavelengths of the electromagnetic spectrum, such as infrared.

Of course, the nature of the objects being observed raises other crucial considerations, including object positioning, object scaling, and the actions and movements of objects. Take object scaling as an example: when a human views an object from different distances, the brain can determine that it is the same object.

However, an MV system might not infer the same result. Consequently, a multifaceted data set for training and accurate testing is needed so that the distance of an object does not prevent the MV system from recognising it. The choice of lens and focal length also directly influences the application’s performance. Most MV systems work with pixel values, but scaling aspects are just as important in applications with moving images.
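The effect of distance and focal length on apparent object size can be estimated with the pinhole camera approximation, which holds when the object is much further away than the focal length. The sketch below is illustrative only: the lens, pixel pitch, and object dimensions are hypothetical, and lens distortion is ignored.

```python
def object_height_px(focal_length_mm, object_height_m, distance_m,
                     pixel_pitch_um):
    """Approximate height of an object on the sensor, in pixels.

    Pinhole model: image height = focal length * object height / distance.
    """
    image_height_mm = focal_length_mm * object_height_m / distance_m
    return image_height_mm * 1000 / pixel_pitch_um  # mm to um, then to pixels


# Hypothetical setup: 8 mm lens, 4 um pixels, object 0.5 m tall.
for distance in (2.0, 4.0, 8.0):
    pixels = object_height_px(8.0, 0.5, distance, 4.0)
    print(f"{distance} m -> {pixels:.0f} px")
```

Because the pixel size halves each time the distance doubles, the training set needs examples across the whole working range, or the lens must be chosen so that the object never falls below the smallest size the network can recognise.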

Innovation and good decision-making are key

Technology innovation and the continued adoption of image processing into a wide range of applications have driven the market to increase by $10 billion over the past five years. Advances in image sensor technologies, machine learning algorithms, AI frameworks and computational devices have also contributed to the market’s significant growth.

It is no wonder that developers of machine vision systems find it challenging to keep up to date with the most recent innovations and resources. However, by choosing the right technology partner, such as EBV, MV developers can ultimately mitigate potential risks, increase design efficiency, and achieve commercial success.